He is actively exploring the practical applications of LLMs, particularly for building personal knowledge bases and enhancing coding workflows, while also experimenting with and tuning small-scale models like nanochat.

Recent Activity

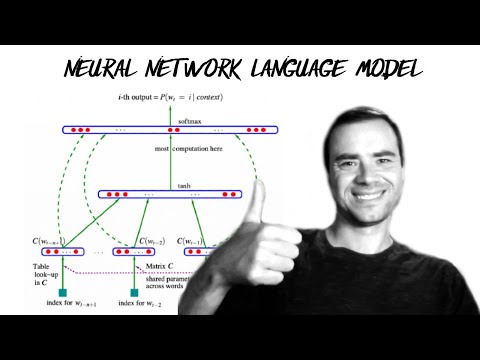

Building makemore Part 2: MLP

Andrej Karpathy

Highlights: This video demonstrates building a multilayer perceptron (MLP) for character-level language modeling, covering essential ML fundamentals like training, hyperparameter tuning, and evaluation. It provides practical insights into handling train/dev/test splits and diagnosing under/overfitting in neural networks.

Worth watching: A hands-on MLP implementation with clear explanations of core machine learning concepts, accessible to beginners and useful for practitioners looking to solidify their understanding.
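The train/dev/test split mentioned in the highlights can be sketched in a few lines. This is a minimal illustration, not the video's code: the 80/10/10 ratio, the function name `split_dataset`, and the placeholder word list are all assumptions made for the example.

```python
import random


def split_dataset(items, train_pct=80, dev_pct=10, seed=42):
    """Shuffle a copy of `items` and cut it into train/dev/test lists.

    The percentages are illustrative defaults, not values taken from the video.
    """
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # seeded so the split is reproducible
    n_train = len(shuffled) * train_pct // 100
    n_dev_end = len(shuffled) * (train_pct + dev_pct) // 100
    return shuffled[:n_train], shuffled[n_train:n_dev_end], shuffled[n_dev_end:]


words = [f"name{i}" for i in range(100)]  # placeholder data
train, dev, test = split_dataset(words)
print(len(train), len(dev), len(test))  # 80 10 10
```

The usual convention is to fit parameters on the train split, tune hyperparameters on the dev split, and touch the test split only sparingly for a final estimate.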

Building makemore Part 3: Activations & Gradients, BatchNorm

Andrej Karpathy

Highlights: This video examines the statistical challenges in training deep neural networks, focusing on how improperly scaled activations and gradients can cause instability. It introduces Batch Normalization as a key technique to stabilize training by normalizing layer inputs.

Worth watching: Practical insights into diagnosing and fixing common deep learning training issues, with clear visualizations of internal network behavior.
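To make the normalization step concrete, here is a rough sketch of a training-time BatchNorm forward pass in plain Python. It is an assumption-laden illustration rather than the video's PyTorch code: the function name `batchnorm_forward` is hypothetical, the variance is the biased (divide by N) estimate, and running statistics for inference are omitted.

```python
import math


def batchnorm_forward(batch, gain, bias, eps=1e-5):
    """Normalize each feature across the batch, then scale and shift.

    `batch` is a list of rows; `gain` and `bias` hold one value per feature.
    """
    n = len(batch)
    n_features = len(batch[0])
    out = [[0.0] * n_features for _ in range(n)]
    for j in range(n_features):
        column = [row[j] for row in batch]
        mean = sum(column) / n
        var = sum((x - mean) ** 2 for x in column) / n  # biased variance (an assumption)
        for i in range(n):
            normalized = (column[i] - mean) / math.sqrt(var + eps)
            out[i][j] = gain[j] * normalized + bias[j]
    return out


batch = [[1.0, 10.0], [2.0, 20.0], [3.0, 30.0], [4.0, 40.0]]
normed = batchnorm_forward(batch, gain=[1.0, 1.0], bias=[0.0, 0.0])
```

With unit gain and zero bias, each output column ends up with roughly zero mean and unit variance regardless of the input scale, which is the property that helps keep activations well behaved.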

Building makemore Part 4: Becoming a Backprop Ninja

Andrej Karpathy

Highlights: This video demonstrates manual backpropagation through a complete 2-layer MLP with BatchNorm, covering gradients from cross entropy loss through embedding tables. It builds intuitive understanding of gradient flow at the tensor level, beyond scalar implementations like micrograd, while reinforcing core deep learning concepts.

Worth watching: Essential viewing for developers wanting to move beyond autograd black boxes and truly understand gradient computation in neural networks, presented by one of the field's most effective educators.
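One piece of that manual backward pass is easy to show in isolation: for a single example, the gradient of the cross entropy loss with respect to the logits is the softmax probabilities minus a one-hot vector for the target. The sketch below, in plain Python rather than the video's PyTorch tensors, checks that formula against a finite-difference estimate; all function names are hypothetical.

```python
import math


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, target):
    """Negative log-probability of the target class."""
    return -math.log(softmax(logits)[target])


def cross_entropy_grad(logits, target):
    """Analytic gradient: softmax(logits) minus one-hot(target)."""
    probs = softmax(logits)
    return [p - (1.0 if i == target else 0.0) for i, p in enumerate(probs)]


def numerical_grad(logits, target, h=1e-6):
    """Central finite differences, used only to check the analytic gradient."""
    grads = []
    for i in range(len(logits)):
        up = list(logits)
        down = list(logits)
        up[i] += h
        down[i] -= h
        grads.append((cross_entropy(up, target) - cross_entropy(down, target)) / (2 * h))
    return grads


logits, target = [2.0, -1.0, 0.5], 0
analytic = cross_entropy_grad(logits, target)
numeric = numerical_grad(logits, target)
max_diff = max(abs(a - b) for a, b in zip(analytic, numeric))
```

Comparing a hand-derived gradient against a reference in this way mirrors the spirit of the exercise: derive each gradient manually, then verify it.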

Building makemore Part 5: Building a WaveNet

Andrej Karpathy

Highlights: This video demonstrates how to evolve a simple 2-layer MLP into a deeper, tree-like neural network architecture that resembles DeepMind's WaveNet (2016). It shows the practical implementation process using PyTorch's torch.nn module while explaining the underlying mechanics of deep learning development.

Worth watching: A clear, hands-on walkthrough of building a complex neural network from simpler components, with insights into both PyTorch fundamentals and the architectural thinking behind influential models like WaveNet.

Let's build GPT: from scratch, in code, spelled out.

Andrej Karpathy

Highlights: In this hands-on coding tutorial, Andrej Karpathy builds a GPT model from scratch, implementing the transformer architecture described in 'Attention is All You Need' and connecting it to GPT-2/3 and ChatGPT. It demonstrates autoregressive language modeling in practice, with GitHub Copilot (itself a GPT model) assisting in writing the code.

Worth watching: Demystifies complex AI concepts through clear, practical coding examples and connects theoretical papers to real implementations, making transformer architectures accessible to developers and enthusiasts.
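The core of the transformer's autoregressive behavior is causal self-attention: each position attends only to itself and earlier positions. The following is a minimal single-head sketch in plain Python, assuming queries, keys, and values are already computed; it is not the video's PyTorch implementation, and `causal_attention` is a hypothetical name.

```python
import math


def causal_attention(queries, keys, values):
    """Single-head scaled dot-product attention with a causal mask.

    Each argument is a list of vectors, one per sequence position.
    """
    d = len(queries[0])
    out = []
    for t, q in enumerate(queries):
        # Position t may only look at positions 0..t (the causal mask).
        scores = [
            sum(qi * ki for qi, ki in zip(q, keys[s])) / math.sqrt(d)
            for s in range(t + 1)
        ]
        m = max(scores)
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        weights = [w / total for w in weights]
        # Weighted average of the visible value vectors.
        out.append([
            sum(w * values[s][j] for s, w in enumerate(weights))
            for j in range(len(values[0]))
        ])
    return out


q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
k = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
v = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
attended = causal_attention(q, k, v)
```

Because the first position can see only itself, its output equals its own value vector, and changing a later token never alters an earlier output.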

![[1hr Talk] Intro to Large Language Models](https://i.ytimg.com/vi/zjkBMFhNj_g/hqdefault.jpg)

[1hr Talk] Intro to Large Language Models

Andrej Karpathy

Highlights: This talk demystifies Large Language Models (LLMs), the core technical component behind systems like ChatGPT, and frames them as a new computing paradigm analogous to operating systems. It covers how they work, where they are headed, and their unique security challenges in a way that is accessible to general audiences.

Worth watching: Andrej Karpathy provides a clear, foundational understanding of LLMs from one of the field's leading educators, making complex concepts accessible while addressing practical implications and security considerations that remain highly relevant.

Let's build the GPT Tokenizer

Andrej Karpathy

Highlights: The video explains that tokenizers are a separate, crucial component in LLMs, using Byte Pair Encoding to translate between text and tokens. It demonstrates building the GPT tokenizer from scratch, highlighting its distinct training process and core encode/decode functions.

Worth watching: A clear explanation of a fundamental yet often overlooked part of how LLMs process text.
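The separate training step and the encode/decode pair can be sketched as a byte-level Byte Pair Encoding toy. This is a simplified illustration under stated assumptions, not the GPT tokenizer itself: it skips regex pre-splitting and special tokens, and the function names are hypothetical.

```python
def get_pair_counts(ids):
    """Count how often each adjacent pair of token ids occurs."""
    counts = {}
    for pair in zip(ids, ids[1:]):
        counts[pair] = counts.get(pair, 0) + 1
    return counts


def merge(ids, pair, new_id):
    """Replace every occurrence of `pair` in `ids` with `new_id`."""
    out = []
    i = 0
    while i < len(ids):
        if i < len(ids) - 1 and (ids[i], ids[i + 1]) == pair:
            out.append(new_id)
            i += 2
        else:
            out.append(ids[i])
            i += 1
    return out


def train(text, num_merges):
    """Learn merges from raw UTF-8 bytes; new ids start at 256."""
    ids = list(text.encode("utf-8"))
    merges = {}
    for k in range(num_merges):
        counts = get_pair_counts(ids)
        if not counts:
            break
        pair = max(counts, key=counts.get)  # most frequent pair
        new_id = 256 + k
        ids = merge(ids, pair, new_id)
        merges[pair] = new_id
    return merges


def encode(text, merges):
    ids = list(text.encode("utf-8"))
    for pair, new_id in merges.items():  # dicts keep insertion (training) order
        ids = merge(ids, pair, new_id)
    return ids


def decode(ids, merges):
    vocab = {i: bytes([i]) for i in range(256)}
    for (a, b), new_id in merges.items():
        vocab[new_id] = vocab[a] + vocab[b]
    return b"".join(vocab[i] for i in ids).decode("utf-8", errors="replace")


sample = "aaabdaaabac"
merges = train(sample, num_merges=3)
token_ids = encode(sample, merges)
```

Training happens once on a corpus and produces the merge table; `encode` and `decode` then reuse that table, which is why the tokenizer can be treated as a stage separate from the language model.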

Let's reproduce GPT-2 (124M)

Andrej Karpathy

Highlights: This video provides a comprehensive, hands-on walkthrough of reproducing the GPT-2 (124M) model from scratch, covering network architecture, training optimization, and hyperparameter tuning based on original papers. It demonstrates the full training pipeline with practical implementation details and concludes with generated text samples to evaluate model performance.

Worth watching: Strong educational value for understanding how transformer-based language models are implemented and how their training is optimized, with clear, practical demonstrations.

Deep Dive into LLMs like ChatGPT

Andrej Karpathy

Highlights: This video provides a comprehensive overview of how Large Language Models like ChatGPT are developed, covering the full training stack from data collection to deployment. It also offers practical mental models for understanding their 'psychology' and optimizing their use in real-world applications.

Worth watching: Andrej Karpathy, a leading AI researcher, delivers an accessible yet thorough explanation that combines technical depth with practical insights, making complex LLM concepts understandable for general audiences.

How I use LLMs

Andrej Karpathy

Highlights: The video provides a practical, example-driven walkthrough of how to effectively use Large Language Models in daily life, covering everything from basic interactions to understanding pricing tiers and model selection. It demystifies the growing LLM ecosystem by showing concrete applications and explaining when to use different models.

Worth watching: Andrej Karpathy's expertise and clear teaching style make complex AI concepts accessible, offering actionable insights for both beginners and experienced users looking to optimize their LLM usage.

@karpathy

Highlights: Karpathy is shifting focus from coding to using LLMs to build and manage personal knowledge bases for research, indicating a move towards knowledge compounding and organization.

Worth reading: It reveals a practical, high-level workflow shift for an AI expert, moving from pure code generation to structured knowledge management using LLMs.

@karpathy

Highlights: Karpathy expresses surprise at the dual nature of LLMs, which are at once highly capable and surprisingly limited.

Worth reading: It captures a nuanced, expert perspective on the current state and paradoxical nature of LLM intelligence.

@karpathy

Highlights: Karpathy is experimenting with automated research and fine-tuning processes for a smaller model (nanochat), indicating hands-on work in model optimization.

Worth reading: It shows direct, technical experimentation with automated fine-tuning workflows on specific model architectures.

@karpathy

Highlights: LLMs represent a novel form of intelligence that defies simple categorization, exhibiting surprising capabilities alongside significant limitations.

Worth reading: Captures the nuanced, paradoxical nature of current LLM capabilities in a concise observation.

@karpathy

Highlights: Shares practical insights and observations from extensive recent experience using Claude for coding, focusing on workflow improvements.

Worth reading: Provides firsthand experience on integrating LLMs into real-world coding practices.

@karpathy

Highlights: Describes an experiment with automated research (autoresearch) for fine-tuning a model called 'nanochat' over an extended period.

Worth reading: Demonstrates practical application of automated AI research and fine-tuning techniques.

@karpathy

Highlights: English is becoming the primary interface for programming and interacting with AI systems, suggesting a shift toward natural language as a programming paradigm.

Worth reading: It highlights the fundamental shift in how humans will interact with and instruct computational systems.

@karpathy

Highlights: Public understanding of AI capabilities is lagging, partly because many formed opinions based on outdated or limited (free-tier) experiences with models like ChatGPT.

Worth reading: It addresses the perception gap in AI progress, which is crucial for realistic public and professional discourse.

@karpathy

Highlights: Large Language Models represent a novel form of intelligence with surprising capabilities and surprising limitations, defying simple categorization.

Worth reading: It captures the dual nature and complexity of modern AI systems that experts are still struggling to understand.

@karpathy

Highlights: Karpathy is experimenting with personalized software for health tracking, specifically aiming to lower his resting heart rate through a structured approach.

Worth reading: It illustrates the trend towards highly customized, personal software applications driven by individual needs.

@karpathy

Highlights: Karpathy published a review article summarizing key developments and trends in the LLM field for the year 2025.

Worth reading: Provides an expert retrospective on the state of LLM technology from a leading AI researcher.

@karpathy

Highlights: Karpathy's coding workflow has dramatically shifted from mostly manual coding to predominantly using AI agents for code generation, with human input reduced to editing and touch-ups.

Worth reading: It demonstrates a significant, rapid shift in developer productivity and workflow due to advances in LLM coding assistants.